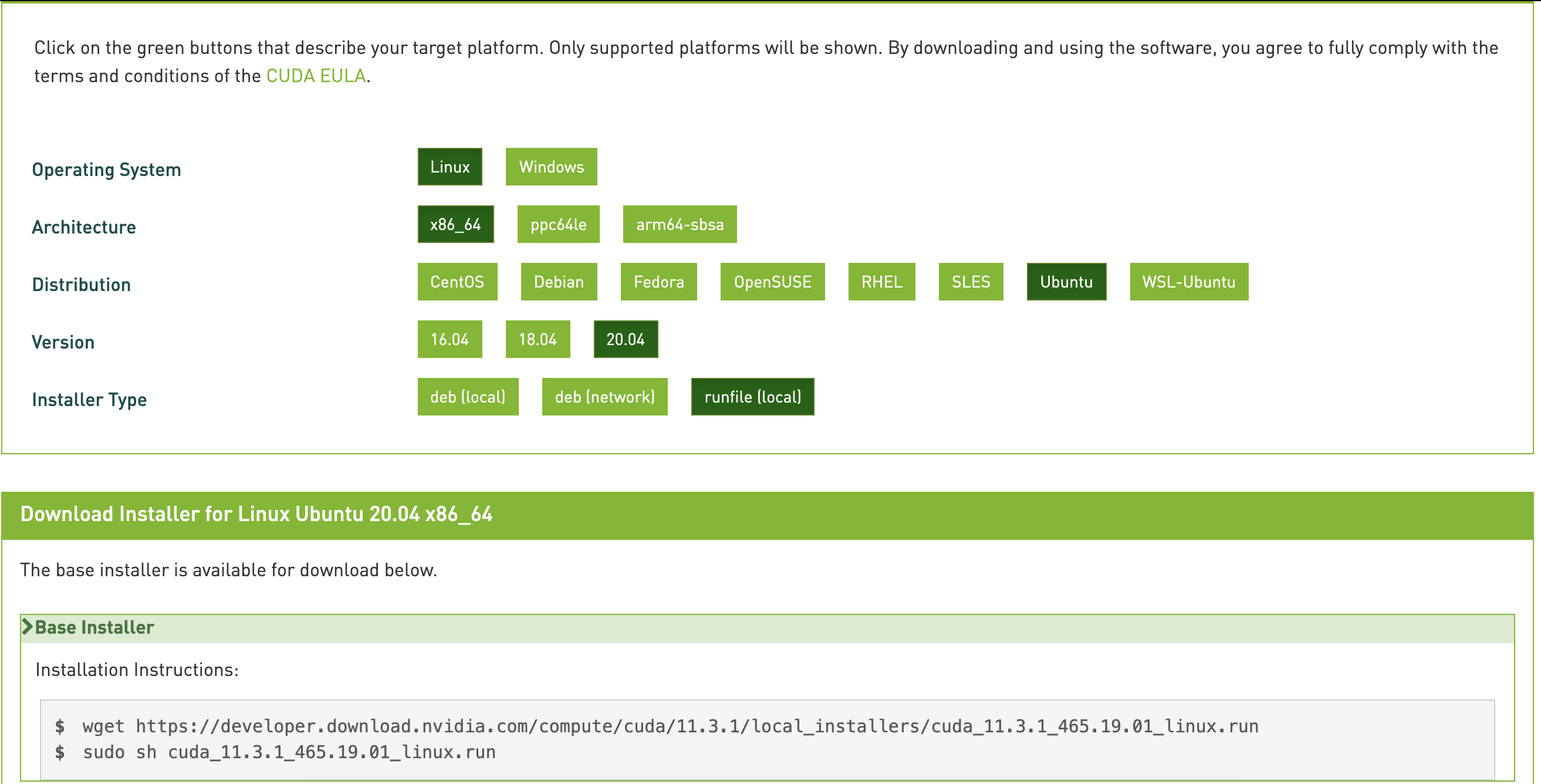

NVIDIA CUDA Toolkit 11.2

1/10/2024

(4) Only Tesla V100 and T4 GPUs are supported for CUDA 11.2 on Arm64 (aarch64) and POWER9 (ppc64le).

Follow these steps to enable the optional repositories.

On x86_64 systems:

    $ subscription-manager repos --enable=rhel-8-for-x86_64-appstream-rpms
    $ subscription-manager repos --enable=rhel-8-for-x86_64-baseos-rpms
    $ subscription-manager repos --enable=codeready-builder-for-rhel-8-x86_64-rpms

On ppc64le systems:

    $ subscription-manager repos --enable=rhel-8-for-ppc64le-appstream-rpms
    $ subscription-manager repos --enable=rhel-8-for-ppc64le-baseos-rpms
    $ subscription-manager repos --enable=codeready-builder-for-rhel-8-ppc64le-rpms

The driver relies on an automatically generated nf file. If a custom nf file is present, this functionality will be disabled and the driver may not work. The file will most likely need manual tweaking for systems with a non-trivial GPU configuration.

The recommended installation package is the cuda package. This package will install the full set of other CUDA packages required for native development and should cover most scenarios.

The cuda package installs all the available packages for native development. That includes the compiler, the debugger, the profiler, the math libraries, and so on. For x86_64 platforms, this also includes Nsight Eclipse Edition and the visual profilers. It also includes the NVIDIA driver package.

On supported platforms, the cuda-cross-aarch64 package and its POWER8 counterpart install all the packages required for cross-platform development to ARMv8 and POWER8, respectively. Libraries and header files of the target architecture's display driver package are also installed to enable the cross compilation of driver applications. The cuda-cross- packages do not install the native display driver.

The packages installed by the packages above can also be installed individually by specifying their names explicitly. The list of available packages can be obtained with:

    $ yum --disablerepo="*" --enablerepo="cuda*" list available # RedHat
    $ dnf --disablerepo="*" --enablerepo="cuda*" list available # Fedora
    $ zypper packages -r cuda # OpenSUSE & SLES
    $ cat /var/lib/apt/lists/*cuda*Packages | grep "Package:" # Ubuntu

The cuda package points to the latest stable release of the CUDA Toolkit. When a new version is available, use a command such as the following to upgrade the toolkit and driver:

    $ sudo zypper install cuda # OpenSUSE & SLES

The cuda-cross- packages can also be upgraded in the same manner.

The cuda-drivers package points to the latest driver release available in the CUDA repository. When a new version is available, use a command such as the following to upgrade the driver:

    $ sudo yum install nvidia-driver-latest-dkms # RHEL7
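The repository and package-listing commands above differ only by architecture or distribution, so they can be generated rather than retyped. The following is a minimal sketch, not part of the original guide: the function names `rhel8_repo_cmds` and `cuda_list_cmd` are hypothetical, and the distribution IDs are an assumption modeled on the `ID` field of `/etc/os-release`. The functions only print commands so that they can be reviewed before anything is run.

```sh
#!/bin/sh

# Hypothetical helper: print the subscription-manager commands that enable
# the optional RHEL 8 repositories for one architecture (x86_64 or ppc64le).
rhel8_repo_cmds() {
    arch="$1"
    case "$arch" in
        x86_64|ppc64le) ;;
        *) printf 'unsupported architecture: %s\n' "$arch" >&2; return 1 ;;
    esac
    for repo in \
        "rhel-8-for-${arch}-appstream-rpms" \
        "rhel-8-for-${arch}-baseos-rpms" \
        "codeready-builder-for-rhel-8-${arch}-rpms"
    do
        printf 'subscription-manager repos --enable=%s\n' "$repo"
    done
}

# Hypothetical helper: print the command that lists available CUDA packages.
# The accepted IDs are assumptions; the printed commands come from the guide.
cuda_list_cmd() {
    case "$1" in
        rhel)
            printf '%s\n' 'yum --disablerepo="*" --enablerepo="cuda*" list available' ;;
        fedora)
            printf '%s\n' 'dnf --disablerepo="*" --enablerepo="cuda*" list available' ;;
        opensuse*|sles)
            printf '%s\n' 'zypper packages -r cuda' ;;
        ubuntu)
            printf '%s\n' 'cat /var/lib/apt/lists/*cuda*Packages | grep "Package:"' ;;
        *)
            printf 'unsupported distribution: %s\n' "$1" >&2
            return 1 ;;
    esac
}
```

For example, `rhel8_repo_cmds "$(uname -m)"` prints the three repository commands for the current machine, and `cuda_list_cmd fedora` prints the `dnf` listing command.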

Because the CPU and GPU have their own memory spaces, computation can proceed on both simultaneously without contention for memory resources.

CUDA-capable GPUs have hundreds of cores that can collectively run thousands of computing threads. These cores have shared resources including a register file and a shared memory. The on-chip shared memory allows parallel tasks running on these cores to share data without sending it over the system memory bus.

This guide will show you how to install and check the correct operation of the CUDA development tools.

(1) The following notes apply to the kernel versions supported by CUDA: For the specific kernel versions supported on Red Hat Enterprise Linux (RHEL), and for a list of SUSE Linux Enterprise Server (SLES) kernel versions including release dates, refer to the respective vendor's documentation. For Ubuntu LTS on x86-64, both the HWE kernel and the server LTS kernel are supported.

(2) Starting with CUDA 11.0, the minimum recommended GCC compiler is GCC 5, due to C++11 requirements in CUB. On distributions such as RHEL 7 or CentOS 7 that may use an older GCC toolchain by default, it is recommended to use a newer GCC toolchain. Newer GCC toolchains are available for these distributions.

(3) Minor versions of the listed compilers (GCC, ICC, PGI, and XLC) are supported as host compilers for nvcc.
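Note (2) gives GCC 5 as the minimum recommended host compiler from CUDA 11.0 onward. A quick way to check a machine against that minimum is sketched below. The function name `gcc_meets_cuda11_minimum` is hypothetical, and the check looks only at the major version, which is an assumption about how strictly the recommendation should be read.

```sh
#!/bin/sh

# Hypothetical helper: succeed (exit status 0) when a GCC version string
# has a major version of at least 5, the minimum named in note (2).
gcc_meets_cuda11_minimum() {
    major="${1%%.*}"               # "4.8.5" -> "4", "9" -> "9"
    case "$major" in
        ''|*[!0-9]*) return 2 ;;   # empty or non-numeric input
    esac
    [ "$major" -ge 5 ]
}
```

A typical call would be `gcc_meets_cuda11_minimum "$(gcc -dumpversion)" || echo "GCC is older than 5"`, which relies on `gcc -dumpversion` printing the compiler version.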

Serial portions of applications are run on the CPU, and parallel portions are offloaded to the GPU. As such, CUDA can be incrementally applied to existing applications. The CPU and GPU are treated as separate devices that have their own memory spaces.

CUDA was developed with several design goals in mind:

- Provide a small set of extensions to standard programming languages, like C, that enable a straightforward implementation of parallel algorithms. With CUDA C/C++, programmers can focus on the task of parallelization of the algorithms rather than spending time on their implementation.
- Support heterogeneous computation where applications use both the CPU and GPU.
